The synthesis trap

Open any Slack channel in a product team right now and you will find someone sharing a NotebookLM summary. A ChatGPT synthesis. An AI-moderated research report that took four hours instead of four weeks. The output looks authoritative. The themes are clear. The language is confident.

And most of it is useless for making a real decision.

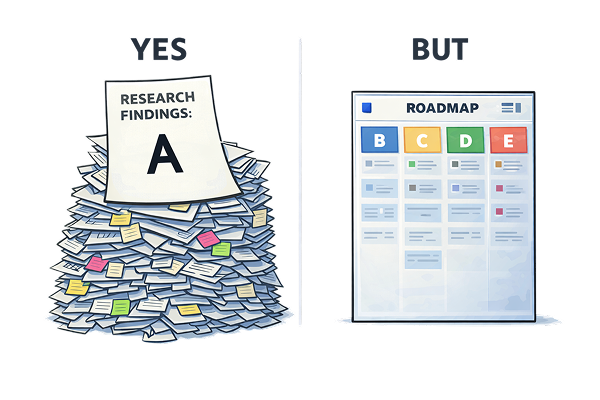

Not because AI is bad at synthesis. It is extraordinary at synthesis. The problem is that synthesis is not insight.

My old boss at KPMG, Steve, was annoyingly precise about this. He would reject entire research decks with a single question: what does this tell people they don't already know? That question changed how I work. It is a brutal filter and it is the right one.

Real insight is counterintuitive. It reframes the problem. It contradicts what you walked in expecting to find. It is not a tidy summary of what your users said; it is the moment where the data corrects your assumptions and you feel it.

AI will give you organised data. It will not give you the adrenaline moment where reality corrects your assumptions. That moment requires a system: one where you know what you are looking for, what would prove you wrong, and how to recognise the anomaly when it surfaces.

This piece is about that system, and about how to make AI genuinely useful inside it.

The system is not the tool

Most people treat AI research tools like a vending machine. Upload the transcripts. Ask a question. Get an answer. Move on. That is not a system. That is pattern-matching on demand, and it will reliably surface what is already obvious.

Before any data touches an AI tool, the scaffolding needs to be in place. Research goal. Personas. Screeners. Discussion guide. And, most importantly, a clear answer to one question: what would change my mind? If you cannot articulate that before you start, you are not doing research. You are fishing for validation.

With that foundation set, the process works in five steps.

Scaffold before AI. Know who you are talking to, what you are trying to learn, and what a surprising result would look like. The AI's job is to process the data; your job is to frame the question it cannot frame for itself.

Question-by-question interrogation. Do not dump your transcripts and ask for a summary. Go one question at a time. Ask what people said about this specific prompt. Ask what the outliers said. Ask what is missing. Summaries compress signal; question-by-question interrogation preserves it.

Hunt for the unusual. The segment that behaves differently from the others. The response that directly contradicts the pattern. The answer that simply does not appear when it should. These are not noise; they are the data worth pursuing.

Push into correlations. How does this segment compare to that one? Which combinations of attributes produce the outcome? Synthesis tells you what happened on average; correlation tells you who it happened to and under what conditions.

Distill to one or two. If you walk into a meeting with ten insights, you have none. You have a presentation. Force yourself to name the finding that would genuinely change a decision. Everything else is context.
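The "hunt for the unusual" and "push into correlations" steps can be sketched in a few lines of Python. This is a minimal illustration, not the workflow used in the studies described here: the segment names, answer codes, the `gap` threshold, and every function name are invented, and it assumes responses have already been coded into (segment, answer) pairs for a single question.

```python
from collections import Counter, defaultdict

# Invented, pre-coded responses for ONE question: (segment, answer) pairs.
# None of these values come from a real study.
responses = (
    [("segment_a", "option_1")] * 3 + [("segment_a", "option_2")] * 1
    + [("segment_b", "option_1")] * 3 + [("segment_b", "option_2")] * 1
    + [("segment_c", "option_2")] * 4
)


def crosstab(pairs):
    """Count how often each answer appears within each segment."""
    table = defaultdict(Counter)
    for segment, answer in pairs:
        table[segment][answer] += 1
    return table


def segment_shares(table):
    """Turn raw counts into within-segment proportions."""
    shares = {}
    for segment, counts in table.items():
        total = sum(counts.values())
        shares[segment] = {answer: n / total for answer, n in counts.items()}
    return shares


def outlier_segments(shares, answer, gap=0.3):
    """Return segments whose share for `answer` sits more than `gap`
    away from the unweighted mean share across all segments."""
    values = {seg: dist.get(answer, 0.0) for seg, dist in shares.items()}
    mean = sum(values.values()) / len(values)
    return sorted(seg for seg, v in values.items() if abs(v - mean) > gap)


shares = segment_shares(crosstab(responses))
print(outlier_segments(shares, "option_1"))
```

A flagged segment is a prompt to go back to the transcripts, not a finding in itself. With samples this small the threshold is arbitrary, and the judgement about whether the difference matters stays with the researcher.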

Real insight is the thing that makes the room go quiet for a second because nobody saw it coming. That only happens if you were specifically looking for it.

The confidence gauge

A few months ago at Compare Club, my senior designer Jesse and I disagreed about a UI control. It was a confidence score: a gauge that tells members how well their current insurance policy stacks up against the market.

The disagreement was about colour. I wanted red at the low end of the gauge: the urgency signal, the prompt to act. Jesse wanted green. Her logic was that users scanning quickly would read green as safety and red as danger, which is the opposite of what we wanted if their score was genuinely poor.

Both positions had internal logic. Neither of us could resolve it from first principles. So we went to research.

The tools did the heavy lifting: Gemini for transcript processing, NotebookLM for cross-session synthesis, ChatGPT for segment comparison. Fast, clean, well-organised output. On the surface, Jesse was right: a slim majority of respondents read the gauge her way, while a substantial minority read it mine. Case closed, or so it seemed.

But then we looked at the overall preference across all four versions of the control.

People were not reading the gauge. They were guessing. One respondent wrote that "green highlighted all the way means everything is done." Another admitted they were "basically just judging based on visual aesthetic." The qualitative signal was clear before we even touched the numbers: the control was not communicating what we thought it was.

The numbers confirmed it. When we asked respondents which version they would actually want on their account, no option commanded a majority. The preference split four ways, with the top two separated by a single percentage point. It was not a close race between two options. It was a four-way deadlock.

That was the insight, and neither of us had been looking for it. We had framed the research as a debate between two colour directions. The data refused to participate in that framing. What it actually showed was that the control itself was fundamentally ambiguous, not in a way that split cleanly along demographics or insurance literacy, but in a way that was essentially random.

We did not ship Jesse's version. We did not ship mine. We redesigned the control entirely, adding explicit labels so the gauge could not be misread regardless of which mental model a user arrived with.

The tools surfaced the split. But the moment where we looked at that four-way deadlock and said "wait, this is telling us something different entirely" was human. That was the last mile. The part no vending machine reaches.

Where the system breaks

Finding the insight is not the hard part. I am reasonably good at that.

Communicating it is where I consistently fail.

The trap is subtle. You have lived inside the data for days. You know what it shows. You are confident it is right. So you present it as "look how right this is", a demonstration of rigour, instead of "here is why this changes what you are about to do". A stakeholder does not care about clean crosstabs. They care about one question: what does this mean for the decision in front of me right now?

I learned this the expensive way at Compare Club.

Jesse and I ran a study on bill upload methods. We tested everything: uploading directly through the app, emailing a bill to a dedicated address, using the iPhone or Android share sheet, scanning with the camera, and connecting your email account directly so the platform could detect bills automatically. The business had a clear favourite. Email connection. Not just because it was convenient for users; it also gave Compare Club commercial access to the inbox, which had real product value downstream.

The research was thorough. The data told a story the business was not expecting.

Half the respondents identified manual upload as their preferred and most trusted method, not the automated option, not the clever one. More than a third used their current submission method simply because it was the only option available, not out of preference. When asked about security and clarity, respondents rated them near the top of the scale; they had a high tolerance for extra steps if those steps guaranteed both. And roughly a quarter stuck with what they had used before, a level of risk aversion that made the "convenience" pitch feel increasingly thin.

The pattern was clear: users prioritised reliability and predictability over novelty or convenience. Document submission was perceived as high-stakes. A process that seemed "too fast" actually felt suspicious; users expected the system to demonstrate it was handling their data safely, even if it took longer.

And yes, users liked the idea of email connection. It felt effortless. It felt smart. And they would absolutely use it, once they trusted the product enough to hand over access to their inbox.

That last sentence was the insight. Trust was a prerequisite, not a byproduct. Compare Club had not yet earned it.

We delivered the finding. The business heard "users like email connection" and shipped it anyway.

The prerequisite got buried somewhere between the readout and the roadmap.

We framed the insight as a preference when we should have framed it as a blocker. The difference is not cosmetic.

What we said: "Users prefer email connection; it feels convenient and effortless."

What we should have said: "This feature depends on a trust handshake that does not exist yet. Shipping without it means low adoption and no way to diagnose why, because the data will read as 'users don't want this' when the actual problem is 'users don't trust us enough yet.' These are different problems with different solutions."

One sentence gets noted and filed. The other reframes the decision.

AI makes this failure mode worse, not better. The outputs it generates look complete. They read like research. They do not read like decisions. A well-formatted AI summary is optimised for comprehensiveness, not for consequence. It will tell you what users said; it will not tell you what your business needs to hear.

Before you present, write one sentence: what does this mean for the decision you are about to make? If you cannot write that sentence cleanly, you have not finished the analysis. The reframe is not a presentation skill. It is a research skill.

The reckoning

For two decades, companies were built around throughput. Hire operators. Reward speed. Measure output. That made sense when execution was expensive.

A competent human could do in a day what a slow process took a week to produce. Speed was a genuine differentiator. Organisations scaled by adding more people who could execute faster.

That logic no longer holds.

AI executes faster than any operator you can hire. It does not get tired. It does not need onboarding. It does not ask for a performance review. The work that used to require three analysts and a project manager now requires a prompt and twenty minutes.

The operators being let go now are not leaving because they failed. They are leaving because the job they were hired to do no longer requires a human. That is a different kind of displacement: harder to argue with, harder to prepare for, harder to explain to someone who spent a decade getting good at something that is now table stakes for a language model.

But here is what AI cannot do:

Know which problem is worth solving in the first place

Structure research to find the unexpected: the thing you were not looking for

Interrogate an output that looks right but smells wrong

Translate an insight into a decision someone else will actually act on

Everything upstream of execution still requires a human. Not because humans are sentimental about it, but because those tasks require judgement shaped by context, consequence, and years of watching what actually happens when organisations make decisions based on bad framing.

The companies that survive this are not the ones who adopt AI fastest. They are the ones who realise what AI cannot replace and invest there deliberately.

The insight was always the point. Execution just used to be expensive enough to distract everyone from that.

If you lead a research team, this is your window. Not to celebrate the disruption, but to move upstream before someone else redraws the map without you. Research that produces decisions, not decks. Synthesis that changes behaviour, not just informs it.

Start here

Three tiers. Each with something concrete you can do this week.

If you lead a team

Audit what your team actually does. Not what the job description says; what fills the hours. How much of it is throughput AI could handle? That number will be uncomfortable. It should be.

Reframe one active project as a decision problem. Not "what do users think about X" but "what do we need to know to decide whether to build X, and what would change our answer?"

Ask your researchers to walk you through their system, not their tools. The tools are irrelevant. The thinking is not. If they cannot describe how they moved from data to decision, that is the gap.

If you are a practitioner

Write "what would prove me wrong" before your next project starts. Not after you have a hypothesis; before. This is the single fastest way to stop collecting evidence for what you already believe.

Go question by question through your research guide. For each one: what decision does the answer to this question affect? If you cannot name the decision, cut the question.

Write the reframe sentence before you present. One sentence: what does this mean for the decision your audience is about to make? If you cannot write it, you are not done.

If you are early in your career

Run a mock study this week. Pick a product you use. Define a research goal. Ask AI to behave like a participant: give it a persona, a context, a frustration. Interrogate the outputs. Ask follow-up questions. Then ask yourself what the AI could not tell you, and why. That gap is where your value lives.

Build a decision log. For every project: what was the question, what did the research say, what decision did it affect, what actually happened. Six months of that log is worth more than most portfolios.
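A decision log needs no special tooling. As a rough sketch, assuming you are comfortable with a little Python, the four fields above map onto a small record type. The class name, field names, and helper functions here are all hypothetical, and a spreadsheet with the same four columns would do the same job.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date


@dataclass
class DecisionLogEntry:
    """One row of the log: question, finding, decision, outcome."""
    logged_on: date
    project: str
    question: str           # what was the question
    finding: str            # what did the research say
    decision_affected: str  # what decision did it affect
    outcome: str = ""       # what actually happened (filled in later)

    def is_closed(self) -> bool:
        """An entry is closed once the real-world outcome is recorded."""
        return bool(self.outcome.strip())


def open_entries(entries):
    """Entries still waiting for an outcome: the ones to revisit."""
    return [e for e in entries if not e.is_closed()]


def to_json_lines(entries):
    """Serialise the log as one JSON object per line for easy appending."""
    lines = []
    for entry in entries:
        record = asdict(entry)
        record["logged_on"] = entry.logged_on.isoformat()
        lines.append(json.dumps(record))
    return "\n".join(lines)
```

The one deliberate design choice is keeping `outcome` empty at first. Reviewing the open entries every few weeks forces the follow-up question of what actually happened, which is the part most project write-ups skip.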

The entry-level role in research was always about doing: recruiting, note-taking, synthesis support. That role is being automated. The role that replaces it is about thinking: structuring questions, interrogating outputs, translating findings into decisions people act on.

That role is not out of reach for someone early in their career. But you have to start building it now, before everyone else figures out that is what the job requires.